I am an AI researcher at Samsung Advanced Institute of Technology (SAIT). I received my M.S. in Electrical Engineering from POSTECH, where I was advised by Prof. Tae-Hyun Oh.

My research focuses on generative AI and multimodal large language models, spanning laughter understanding, video reasoning, speech-to-3D talking head generation, and music generation. More recently, I have become particularly interested in LLM agents for scientific discovery, including post-training, multi-agent systems, and traceable reasoning for forward and inverse design, with a focus on reward design for reinforcement learning (RL).

News

| 2026 | Our paper on multimodal laughter reasoning, SMILE-Next, is accepted to ACL 2026 Oral. |

|---|---|

| 2025 | Our paper on a rejection sampling for LLM-based materials discovery appears at AI for Science @ NeurIPS 2025. |

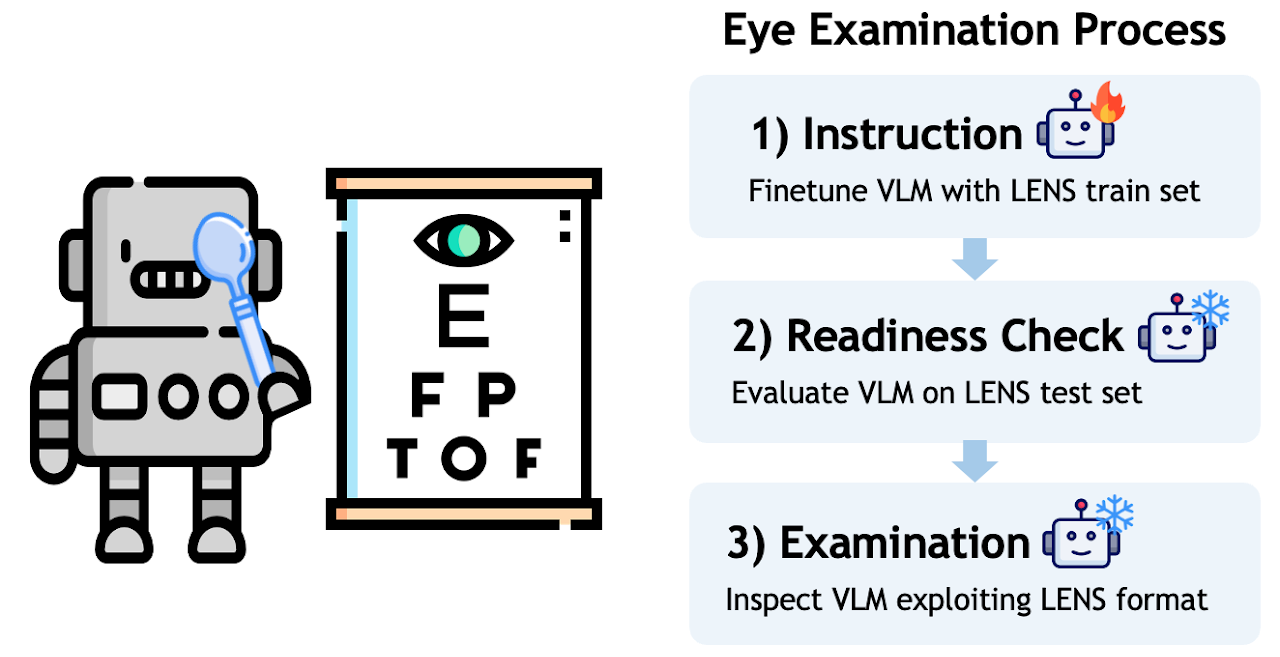

| 2025 | VLM's Eye Examination is published in TMLR 2025. |

| 2025 | Our paper on 3D face mesh video reconstruction is invited to ICLR 2025 from TMLR. |

| 2024 | I joined Samsung Advanced Institute of Technology as an AI Researcher. |

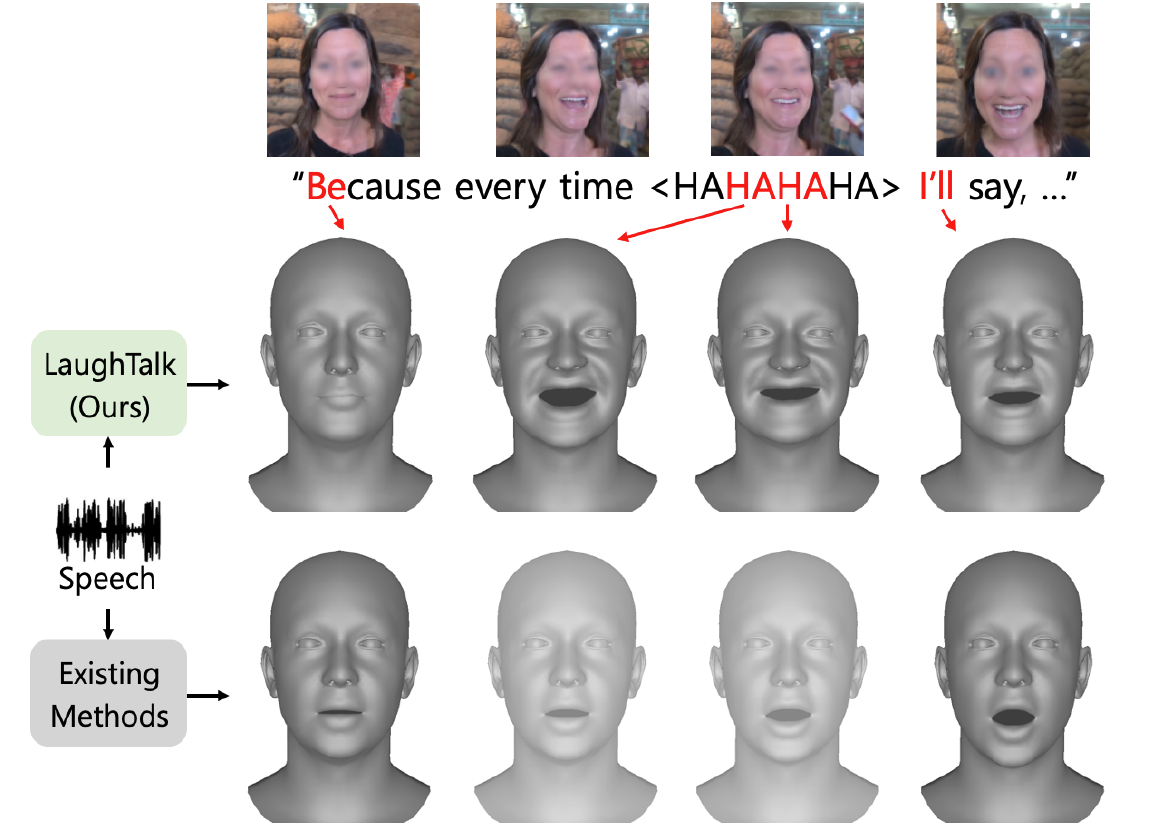

| 2024 | SMILE is accepted to NAACL 2024, and LaughTalk is accepted to WACV 2024. |

Selected Publications

-

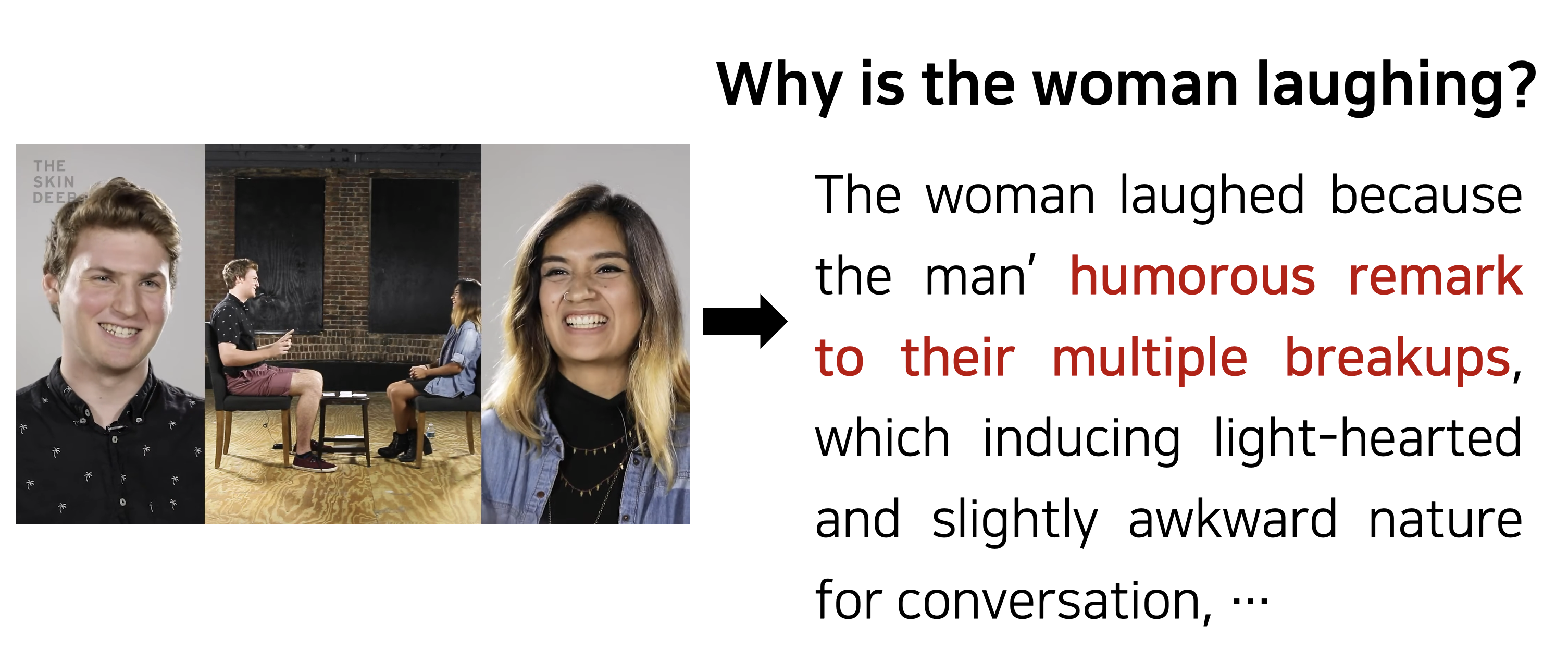

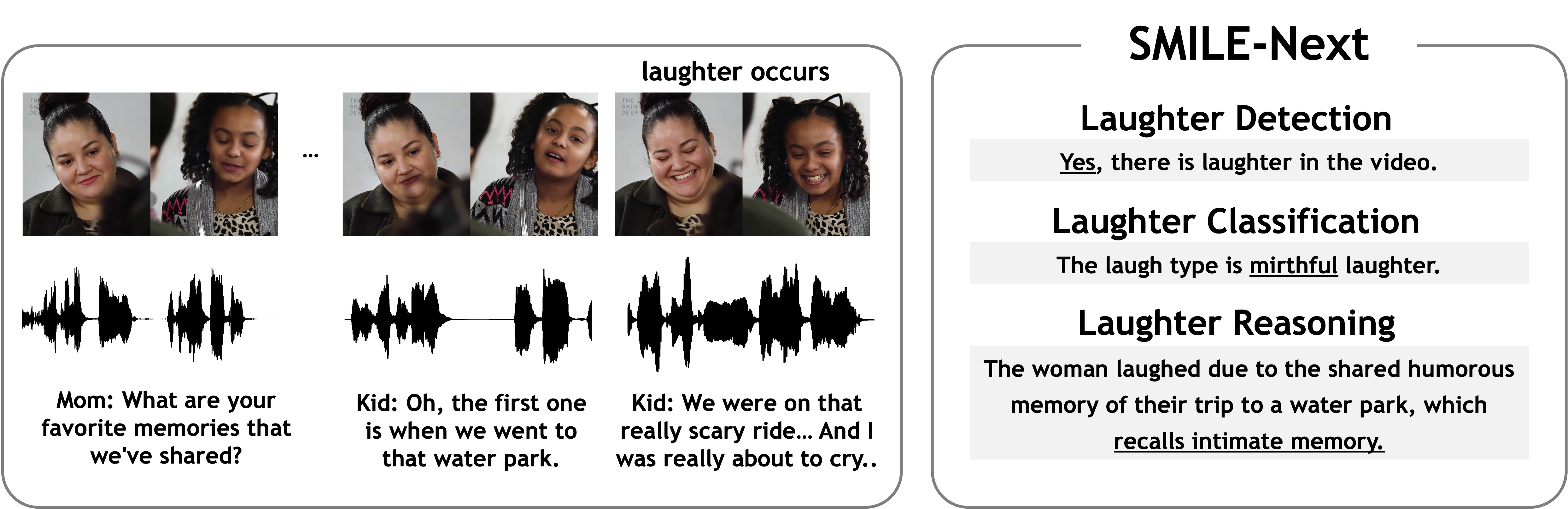

SMILE-Next: Teaching Large Language Models to Detect, Classify, and Reason about LaughterAnnual Meeting of the Association for Computational Linguistics (ACL), 2026 Oral

SMILE-Next: Teaching Large Language Models to Detect, Classify, and Reason about LaughterAnnual Meeting of the Association for Computational Linguistics (ACL), 2026 Oral -

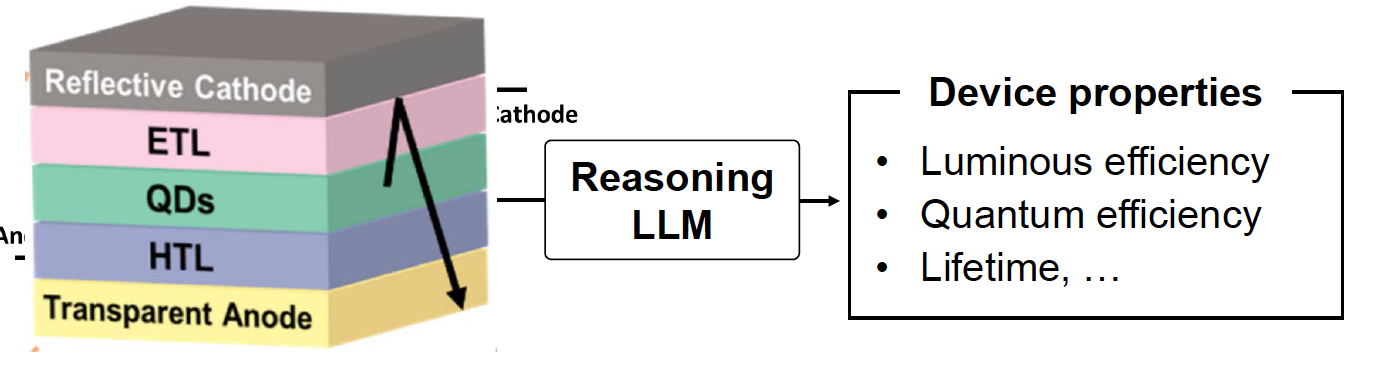

Aligning Reasoning LLMs for Materials Discovery with Physics-aware Rejection SamplingAI for Science Workshop at NeurIPS, 2025

Aligning Reasoning LLMs for Materials Discovery with Physics-aware Rejection SamplingAI for Science Workshop at NeurIPS, 2025 -

VLM's Eye Examination: Instruct and Inspect Visual Competency of Vision Language ModelsTransactions on Machine Learning Research (TMLR), 2025

VLM's Eye Examination: Instruct and Inspect Visual Competency of Vision Language ModelsTransactions on Machine Learning Research (TMLR), 2025 -

LaughTalk: Expressing 3D Talking Head Generation with LaughterIEEE/CVF Winter Conference on Applications of Computer Vision (WACV), 2024

LaughTalk: Expressing 3D Talking Head Generation with LaughterIEEE/CVF Winter Conference on Applications of Computer Vision (WACV), 2024 -

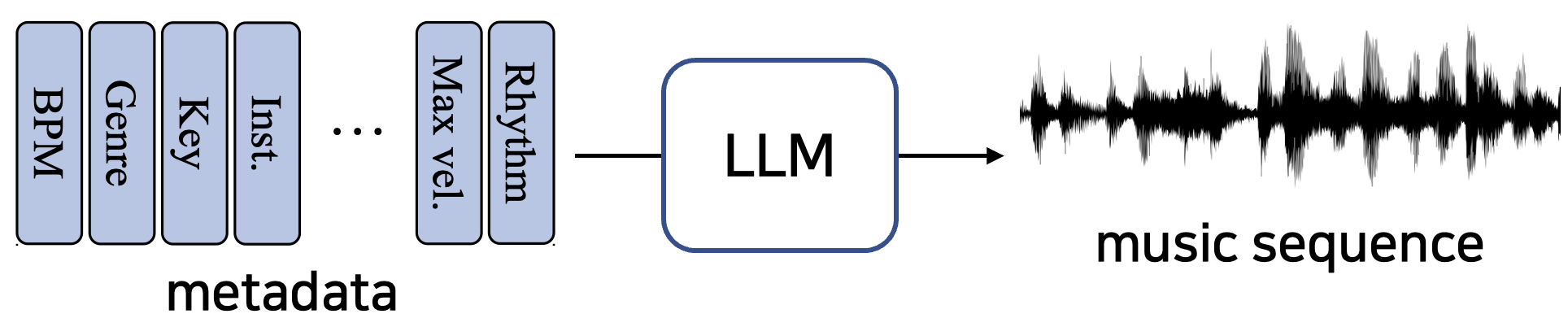

ComMU: Dataset for Combinatorial Music GenerationConference on Neural Information Processing Systems (NeurIPS), 2022

ComMU: Dataset for Combinatorial Music GenerationConference on Neural Information Processing Systems (NeurIPS), 2022

Work Experience

| 2024 - Present | AI Researcher, Samsung Advanced Institute of Technology (SAIT) |
|---|---|
| 2022 - 2024 | Graduate Researcher, POSTECH AMI Lab |
| 2021 - 2022 | Machine Learning Engineer, POZAlabs |
| 2017 - 2018 | Military Service, Republic of Korea Army, Vanguard Unit, discharged as sergeant |

Teaching and Mentoring

| 2024 | Instructor, Introduction to LLM, Fastcampus |
|---|---|
| 2021 - 2025 | Teaching Assistant, Naver Boostcamp AI Tech |
| 2022 - 2023 | Teaching Assistant, NLP and speech synthesis courses at Samsung Software Academy For Youth |
| 2022 | Teaching Assistant, Computer Vision (EECE 490) at POSTECH |
| Mentoring | JungMok Lee, now a Ph.D. student at KAIST, collaborated on visual LLM reasoning work accepted to ACL 2026 |

Professional Services

Conference Reviewer: CVPR, ICLR, NeurIPS, ACL, ICML, ICCV, AAAI